Where AI Coding Is Actually Heading

Parallel agents, self-healing deploys, and autonomous development pipelines — based on patterns already working

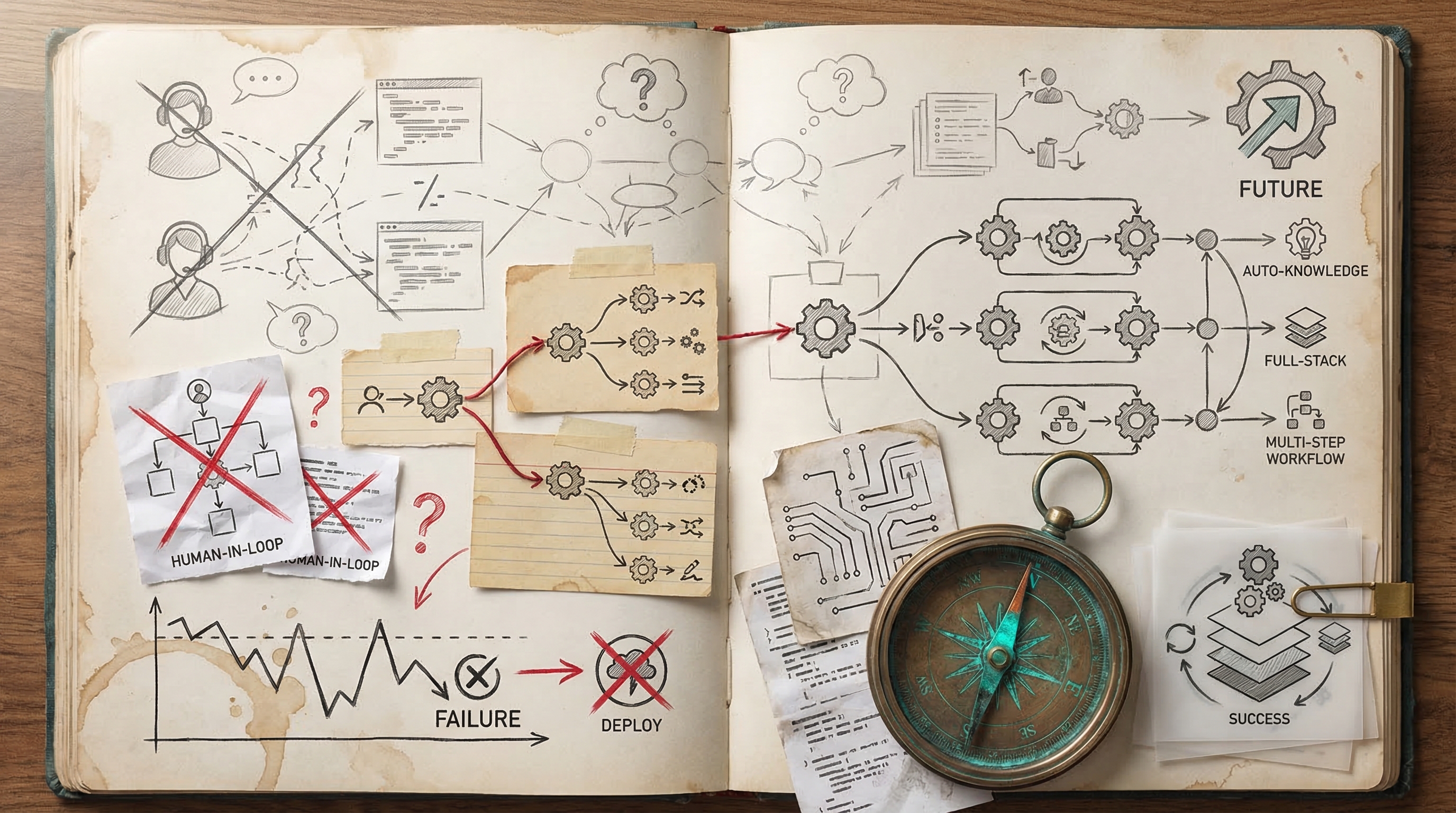

My usage data (924 hours across 338 sessions) shows me pushing Claude Code toward autonomous knowledge management, full-stack deployment, and multi-step workflows. It also shows clear opportunities to eliminate recurring friction (wrong approach 54 times, buggy code 56 times) through more structured autonomous pipelines.

The workflows I use today would have seemed impossible a year ago. Here's what I think becomes possible in the next year — based not on speculation, but on patterns I'm already seeing work at small scale.

Autonomous Obsidian Vault Agent Pipeline

My heaviest workflow — processing meeting transcripts, extracting projects, updating people notes, and maintaining hub files — spans dozens of sessions and is my most consistent source of friction when Claude misplaces items or misunderstands which files to update. A dedicated autonomous agent could watch for new transcripts, parse them against my vault schema, route action items to the correct project files, and self-validate by cross-referencing existing notes before committing. With parallel sub-agents handling entity extraction, project routing, and cross-link validation simultaneously, my entire post-meeting capture could happen in one command with zero corrections.

How to Start Experimenting

Use Claude Code's Task tool for parallel sub-agents (I've already invoked Task/TaskUpdate 1,429 times), combined with a CLAUDE.md that encodes my vault conventions, file naming rules, and routing logic for decisions vs. action items vs. project briefs.

Read CLAUDE.md and the vault structure under /vault. I'm pasting a meeting transcript below. Use parallel sub-agents to: (1) extract all people mentioned and update or create their person notes, (2) extract all action items and route each to its correct project file — NOT the meeting note, (3) extract all decisions and append them to the relevant project's decision log, (4) create the meeting note with only summary, attendees, and discussion points. Before writing any files, have a validation sub-agent check that every person reference matches an existing person note and every project reference matches an existing project file. Show me the routing plan and wait for my approval before writing. Here's the transcript:

[PASTE TRANSCRIPT]
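The validation step in that prompt is simple enough to express as a plain function, which makes it clearer what "every reference matches an existing note" means. This is a minimal sketch, not how Claude Code implements sub-agents: the `RoutedItem` shape, the field names, and the sample people and projects are all hypothetical.

```typescript
// Hypothetical shape of one routed item; not part of any real vault schema.
interface RoutedItem {
  kind: "action" | "decision";
  text: string;
  project: string; // project file the item would be appended to
  people: string[]; // person notes the item references
}

interface RoutingIssue {
  item: string;
  problem: string;
}

// Returns every reference that does not match an existing note.
// An empty result means the plan is safe to show for approval.
function validateRoutingPlan(
  plan: RoutedItem[],
  existingProjects: Set<string>,
  existingPeople: Set<string>,
): RoutingIssue[] {
  const found: RoutingIssue[] = [];
  for (const item of plan) {
    if (!existingProjects.has(item.project)) {
      found.push({ item: item.text, problem: `unknown project: ${item.project}` });
    }
    for (const person of item.people) {
      if (!existingPeople.has(person)) {
        found.push({ item: item.text, problem: `unknown person: ${person}` });
      }
    }
  }
  return found;
}

// Made-up sample data: the second item points at notes that do not exist.
const routingIssues = validateRoutingPlan(
  [
    { kind: "action", text: "Send revised quote", project: "Website Redesign", people: ["Dana"] },
    { kind: "decision", text: "Use Stripe Connect", project: "Payments", people: ["Sam"] },
  ],
  new Set(["Website Redesign"]),
  new Set(["Dana"]),
);
```

Here `routingIssues` holds two entries, one for the unknown project and one for the unknown person. Anything in that list should block the write step until the plan is corrected.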

Test-Driven Autonomous Feature Implementation

56 instances of buggy code and 54 wrong-approach attempts — including a full feature built against a nonexistent JSON file — show that Claude works best when it can validate against concrete specifications before committing to an approach. An autonomous test-first workflow would have Claude write failing tests from my requirements, then iterate implementation against those tests in a loop until green, catching wrong assumptions (like the report.html vs JSON mistake) before I ever see broken code. This transforms Claude from 'implement then debug with human' to 'autonomously iterate until proven correct.'

How to Start Experimenting

Leverage Claude Code's Bash tool to run my test suite in a loop. Combine with the existing code review sub-agent pattern I've already used (architecture audit with 8 parallel sub-agents) to validate before PR creation.

I want to implement the following feature: [DESCRIBE FEATURE]. Before writing any implementation code, do this autonomously:

1. Read the existing codebase to understand current patterns, data formats, and API shapes — list every assumption you're making about inputs/outputs

2. Write comprehensive test cases that encode these assumptions and the feature requirements

3. Run the tests to confirm they fail for the right reasons

4. Implement the feature iteratively: after each significant change, run the new tests and fix failures before proceeding

5. Once all tests pass, run the existing test suite to check for regressions

6. Run a code review sub-agent to check for style consistency and TypeScript errors

7. Only after all checks pass, create the PR with a summary of what was built and what was validated

Do not ask me questions — if you're unsure about a data format or API shape, read the actual source files to verify rather than assuming.
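The core of this workflow is a bounded loop: run the tests, hand the failures to a fix step, and repeat until green or until an attempt limit is reached. A minimal sketch of that control flow follows. `runTests` and `applyFix` are hypothetical injected functions; in a real setup `runTests` would shell out to the test command, and the simulated suite below is made up for illustration.

```typescript
interface TestRun {
  passed: boolean;
  failures: string[];
}

interface LoopOutcome {
  green: boolean;
  attempts: number; // number of test runs performed
  lastFailures: string[];
}

// Runs the suite and, while it fails, hands the failures to `applyFix` and retries.
// Stops at `maxAttempts` so a wrong approach surfaces instead of looping forever.
function iterateUntilGreen(
  runTests: () => TestRun,
  applyFix: (failures: string[]) => void,
  maxAttempts: number,
): LoopOutcome {
  let run = runTests();
  let attempts = 1;
  while (!run.passed && attempts < maxAttempts) {
    applyFix(run.failures);
    run = runTests();
    attempts += 1;
  }
  return { green: run.passed, attempts, lastFailures: run.failures };
}

// Simulated suite: two failing tests, and each fix pass resolves one of them.
const pendingFailures = ["parses report.html", "handles empty input"];
const loopOutcome = iterateUntilGreen(
  () => ({ passed: pendingFailures.length === 0, failures: [...pendingFailures] }),
  () => {
    pendingFailures.shift();
  },
  5,
);
```

In this simulation the loop goes green on the third test run. The attempt cap is the important design choice: when the cap is hit, the remaining failures are the signal that the approach itself may be wrong.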

Parallel Deploy-and-Verify Release Automation

With 25 deployment sessions and friction from Azure quota failures, CORS/auth issues, Vercel build-time env var bugs, and post-deploy debugging passes, my release process is ripe for autonomous orchestration. A parallel agent pipeline could handle build verification, environment validation, infrastructure provisioning, deployment, and post-deploy smoke testing as independent sub-agents — catching issues like missing API keys at build time or incorrect CORS origins before they hit production. My Stripe Connect session alone had a production bug that could have been caught by an automated post-deploy verification agent.

How to Start Experimenting

Chain Claude Code's Task tool with Bash to create a multi-stage deploy pipeline: pre-flight checks (env vars, quotas, config), build, deploy, and automated smoke tests. The independent checks run as parallel sub-agents, the deploy and smoke-test stages wait on them, and every stage reports back with pass/fail status.

I need to deploy the current branch to production. Run this as an autonomous multi-agent pipeline:

Sub-agent 1 — Pre-flight: Check that all required environment variables exist in the deployment config, verify API keys are not referenced at build time (lazy init only), and confirm the build succeeds locally with `npm run build`.

Sub-agent 2 — Infrastructure: Verify the target environment has sufficient quotas/resources, check CORS origins include the production domain, and validate any database migrations are ready.

Sub-agent 3 — Deploy: Execute the deployment only after sub-agents 1 and 2 both pass. Capture the full deploy log.

Sub-agent 4 — Smoke test: After deploy completes, hit the key endpoints (list them from the route files), verify responses are 200, check that auth flows work, and confirm no console errors on the main pages.

Report all results in a single summary. If any sub-agent fails, stop the pipeline and show me exactly what failed and a proposed fix. Do not proceed to the next stage on failure.
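The gating in that prompt (sub-agents 1 and 2 in parallel, deploy only after both pass, smoke test only after deploy) is a small dependency graph. One way to make the ordering explicit is to group stages into waves. This is a sketch under the assumption that each stage lists the stages it waits on; the `Stage` type and the stage ids are hypothetical labels for the four sub-agents above.

```typescript
interface Stage {
  id: string;
  dependsOn: string[];
}

// Groups stages into waves: every stage in a wave has all of its dependencies
// satisfied by earlier waves, so stages within one wave can run in parallel.
function planWaves(stages: Stage[]): string[][] {
  const done = new Set<string>();
  const waves: string[][] = [];
  let remaining = [...stages];
  while (remaining.length > 0) {
    const ready = remaining.filter((s) => s.dependsOn.every((d) => done.has(d)));
    if (ready.length === 0) {
      throw new Error("cyclic or unknown dependency among stages");
    }
    waves.push(ready.map((s) => s.id));
    for (const s of ready) {
      done.add(s.id);
    }
    remaining = remaining.filter((s) => !done.has(s.id));
  }
  return waves;
}

const deployWaves = planWaves([
  { id: "preflight", dependsOn: [] },
  { id: "infrastructure", dependsOn: [] },
  { id: "deploy", dependsOn: ["preflight", "infrastructure"] },
  { id: "smoke-test", dependsOn: ["deploy"] },
]);
// deployWaves: [["preflight", "infrastructure"], ["deploy"], ["smoke-test"]]
```

An orchestrator would run each wave to completion and stop at the first wave containing a failure, which matches the "do not proceed to the next stage on failure" rule.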

Autonomous Bug-Fix-to-PR Pipeline

With 42 bug fix sessions and frequent friction from wrong approaches and shipped bugs, I can set up Claude to take a reported bug, write a failing test that reproduces it, iterate on the fix until tests pass, run a code review agent, and open a PR, all without human intervention. Separate sub-agents can handle the root-cause search and the review independently of the fix, reducing my typical bug-fix cycle from interactive back-and-forth to a single prompt-and-wait.

How to Start Experimenting

Use Claude Code's Task tool for parallel sub-agents and a CLAUDE.md with my project's test/lint/build commands so Claude can iterate autonomously against my CI checks.

I need you to fix this bug autonomously. Here's the issue: [DESCRIBE BUG]. Your workflow: 1) Use a sub-agent to search the codebase and identify the root cause across all relevant files. 2) Write a failing test that reproduces the bug. 3) Implement the fix, iterating until ALL tests pass (run: npm test). 4) Run the linter (npm run lint) and fix any issues. 5) Run a full build (npm run build) and verify it succeeds. 6) Use a separate sub-agent to do a code review of your changes, checking for edge cases, type safety, and regressions. 7) Address any P1/P2 findings from the review. 8) Create a git branch, commit with a conventional commit message, and push a PR with a description that explains the root cause and fix. Do NOT ask me questions — make reasonable decisions and document your assumptions in the PR description.

Parallel Agent Architecture Audits

My one architecture audit session spawned 8 parallel sub-agents and scored my codebase at 59% — imagine running this continuously. I can orchestrate parallel agents that each audit a different dimension (security, performance, accessibility, type safety, dead code, dependency health) and produce a unified prioritized remediation backlog. With my TypeScript-heavy codebase (4,283 tool uses), specialized agents can catch issues like the server/client component bugs and non-immutable migration expressions that caused production friction.

How to Start Experimenting

Use Claude Code's Task tool to spawn specialized sub-agents in parallel, each with a focused audit scope, then have a coordinator agent merge and prioritize findings.

Run a comprehensive parallel architecture audit of this codebase. Spawn these sub-agents simultaneously using the Task tool:

1. **Security Agent**: Scan for exposed secrets, unsafe data in client bundles, auth bypass risks, and XSS vectors. Check that server-only code is never imported into client components.

2. **Type Safety Agent**: Find all `any` types, missing null checks, untyped API responses, and unsafe type assertions. Score coverage.

3. **Performance Agent**: Identify unoptimized queries, missing indexes, N+1 patterns, unawaited promises (especially in serverless), and bundle size issues.

4. **Dead Code Agent**: Find unused exports, unreachable routes, orphaned components, and stale migrations.

5. **Next.js Patterns Agent**: Check for server/client component boundary violations, incorrect use of cookies/headers in server components, and prerendering compatibility.

6. **API & Integration Agent**: Audit all external integrations (Stripe, Supabase, Resend, Calendly) for error handling, retry logic, and graceful degradation.

After all agents complete, synthesize findings into a single prioritized remediation plan with P0/P1/P2 ratings and estimated effort. Output as a markdown file at docs/architecture-audit-YYYY-MM-DD.md.
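The synthesis step is where overlapping findings from different agents need to be reconciled. A minimal sketch of one possible merge rule follows: treat the same file and issue as a duplicate, keep the most severe rating, then sort by priority. The `Finding` shape, the agent names, and the sample file paths are hypothetical.

```typescript
type Priority = "P0" | "P1" | "P2";

interface Finding {
  agent: string;
  file: string;
  issue: string;
  priority: Priority;
}

const PRIORITY_RANK: Record<Priority, number> = { P0: 0, P1: 1, P2: 2 };

// Merges per-agent findings, collapses duplicates (same file and issue, keeping
// the most severe rating), and sorts by priority with file name as a tie-break.
function mergeFindings(perAgent: Finding[][]): Finding[] {
  const byKey = new Map<string, Finding>();
  for (const findings of perAgent) {
    for (const finding of findings) {
      const key = `${finding.file}::${finding.issue}`;
      const existing = byKey.get(key);
      if (!existing || PRIORITY_RANK[finding.priority] < PRIORITY_RANK[existing.priority]) {
        byKey.set(key, finding);
      }
    }
  }
  return Array.from(byKey.values()).sort(
    (a, b) =>
      PRIORITY_RANK[a.priority] - PRIORITY_RANK[b.priority] || a.file.localeCompare(b.file),
  );
}

// Made-up findings: two agents report the same issue with different ratings.
const mergedFindings = mergeFindings([
  [{ agent: "security", file: "app/api/checkout/route.ts", issue: "missing auth check", priority: "P0" }],
  [{ agent: "type-safety", file: "lib/stripe.ts", issue: "untyped API response", priority: "P2" }],
  [{ agent: "integration", file: "lib/stripe.ts", issue: "untyped API response", priority: "P1" }],
]);
```

The result has two entries: the P0 auth finding first, then the Stripe finding at P1, because the stricter of the two ratings wins.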

Self-Healing Deployment with Automated Rollback

With 25 deployment sessions and recurring friction from build failures, missing env vars, and production-only errors (cookie deletion in Server Components, unawaited inserts on serverless), I can build an autonomous deploy agent that pushes to preview, runs smoke tests, validates environment configuration, and either promotes to production or rolls back with a detailed failure report. This eliminates the pattern of shipping, discovering breakage, then opening a hotfix session.

How to Start Experimenting

Combine Claude Code with my Vercel CLI and Supabase CLI to create a deployment checklist agent that validates each step before proceeding, using Bash tool calls to run real deployment commands.

Act as my autonomous deployment agent. Execute this full deployment pipeline:

1. **Pre-flight checks**: Run `npm run build` locally. Run `npm test`. Run `npm run lint`. If ANY fail, stop and fix the issues automatically, iterating until all pass.

2. **Environment audit**: Read the codebase for all `process.env.*` references. Compare against `.env.example` and the Vercel environment (run `vercel env ls`). Flag any variables referenced in code but missing from production config. List them and halt if critical ones are missing.

3. **Migration safety**: Check for any pending Supabase migrations. Validate that migration SQL uses only immutable functions in indexes and constraints. Run `supabase db push --dry-run` and report the output.

4. **Server/Client boundary check**: Grep for any server-only imports (cookies(), headers(), server actions) that might be imported into 'use client' files. Flag violations.

5. **Deploy to preview**: Run `vercel --yes` to create a preview deployment. Capture the preview URL.

6. **Smoke test**: Use curl/fetch to hit the preview URL's key routes (/, /dashboard, /api/health if it exists). Verify 200 responses and no error strings in the HTML.

7. **Decision**: If all checks pass, report 'READY FOR PRODUCTION' with a summary. If any check fails, provide a detailed failure report with the specific fix needed.

Document everything in a deployment log at docs/deploy-log-YYYY-MM-DD.md.
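The environment audit in step 2 comes down to a set difference: names referenced in code minus names configured for production. A rough sketch of that comparison is below. It only catches the literal `process.env.NAME` form (bracket access and destructuring are missed), and it assumes the configured names have already been collected into an array, since parsing `vercel env ls` output is outside this sketch. The function names and sample sources are hypothetical.

```typescript
// Collects every distinct `process.env.NAME` reference in the given source text.
// Limitation: misses process.env["NAME"] and destructured access.
function findEnvReferences(source: string): string[] {
  const found = new Set<string>();
  const pattern = /process\.env\.([A-Za-z_][A-Za-z0-9_]*)/g;
  let match: RegExpExecArray | null;
  while ((match = pattern.exec(source)) !== null) {
    const varName = match[1];
    if (varName !== undefined) {
      found.add(varName);
    }
  }
  return Array.from(found).sort();
}

// Returns names referenced in code but absent from the configured list.
function missingEnvVars(sources: string[], configured: string[]): string[] {
  const configuredSet = new Set(configured);
  const referenced = new Set<string>();
  for (const source of sources) {
    for (const varName of findEnvReferences(source)) {
      referenced.add(varName);
    }
  }
  return Array.from(referenced)
    .filter((varName) => !configuredSet.has(varName))
    .sort();
}

// Made-up source snippets standing in for real files.
const missingVars = missingEnvVars(
  [
    "const stripe = new Stripe(process.env.STRIPE_SECRET_KEY!);",
    "const url = process.env.NEXT_PUBLIC_SUPABASE_URL ?? process.env.SUPABASE_URL;",
  ],
  ["STRIPE_SECRET_KEY", "NEXT_PUBLIC_SUPABASE_URL"],
);
// missingVars: ["SUPABASE_URL"]
```

A non-empty result is the condition under which the pipeline should halt before deploying.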

Parallel Sub-Agent Architecture Audits and Fixes

My agent-native architecture audit already demonstrated 8 parallel sub-agents scoring a codebase at 59% — but it stopped at diagnosis. Imagine triggering a full audit-to-fix pipeline where parallel agents each own a problem domain (type safety, API contracts, dead code, performance), implement fixes independently against test suites, and converge into a single PR with a confidence-scored changelog. With 53 buggy_code and 47 wrong_approach friction events, autonomous agents that iterate against tsc --noEmit and my test runner before surfacing results could eliminate the majority of my shipped bugs.

How to Start Experimenting

Use Claude Code's sub-agent orchestration via TaskCreate (I already have 513 TaskCreate invocations) combined with Bash tool loops that run type-checking and tests after each fix pass.

Run a full codebase health audit using parallel sub-agents. Create one sub-agent for each category: (1) TypeScript strict type errors, (2) unused exports and dead code, (3) async/await correctness (especially unawaited promises in serverless contexts), (4) Next.js server/client component boundary violations, (5) environment variable usage safety. Each sub-agent should: scan the codebase, identify all issues, implement fixes, and run `npx tsc --noEmit` after each fix to verify no regressions. After all sub-agents complete, merge all changes, run the full build, and create a single PR with a categorized summary of every fix and its confidence level.
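Category (4), the server/client boundary check, can be approximated with a simple text scan before any agent gets involved. The sketch below flags files that start with the "use client" directive and also import from a server-only module. It is a heuristic, not a parser: it ignores directives preceded by comments and transitive imports, and the module list, type names, and sample files are assumptions for illustration.

```typescript
interface SourceFile {
  path: string;
  content: string;
}

// Modules treated as server-only in this sketch; extend the list per project.
const SERVER_ONLY_MODULES = ["next/headers", "server-only"];

// Treats a file as a client component when it begins with the "use client" directive.
function isClientFile(content: string): boolean {
  return /^\s*(['"])use client\1/.test(content);
}

// Lists client files that directly import a server-only module.
function findBoundaryViolations(files: SourceFile[]): string[] {
  const violations: string[] = [];
  for (const file of files) {
    if (!isClientFile(file.content)) {
      continue;
    }
    for (const mod of SERVER_ONLY_MODULES) {
      const importPattern = new RegExp(`from\\s+['"]${mod}['"]`);
      if (importPattern.test(file.content)) {
        violations.push(`${file.path} imports ${mod}`);
      }
    }
  }
  return violations;
}

// Made-up files: only the first is a client component with a server-only import.
const boundaryViolations = findBoundaryViolations([
  {
    path: "components/LogoutButton.tsx",
    content: `"use client";\nimport { cookies } from "next/headers";`,
  },
  { path: "app/page.tsx", content: `import { cookies } from "next/headers";` },
]);
// boundaryViolations: ["components/LogoutButton.tsx imports next/headers"]
```

A scan like this gives the sub-agent a concrete starting list, with the type checker and build acting as the real verification.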

Self-Healing Deploy Pipeline with Rollback

I had 21 deployment sessions with recurring friction: prerendering failures from useSearchParams, server/client component boundary violations, missing env vars, and Vercel CLI project linking issues. An autonomous deploy agent could run a pre-deploy checklist (validate env vars exist, check server/client boundaries, test SSR paths), deploy to a preview URL, run smoke tests against it using my chrome MCP tool, and either promote to production or auto-rollback with a diagnostic report. This turns my current deploy-debug-hotfix cycle into a single command.

How to Start Experimenting

Chain Claude Code's Bash tool for build/deploy commands with the mcp__claude-in-chrome__computer tool (already used 560 times) for post-deploy visual and functional verification against the preview URL.

Implement an autonomous deploy pipeline for this Next.js project.

Step 1: Pre-deploy validation. Run `npx tsc --noEmit`, check that every `process.env.` reference has a corresponding entry in .env.example, scan for useSearchParams calls that are not wrapped in a Suspense boundary and for cookie writes in server components, and verify no client-only imports in server components.

Step 2: Deploy to Vercel preview with `vercel --no-wait` and capture the preview URL.

Step 3: Wait for the deployment to be ready, then use the browser tool to visit the preview URL and check: homepage loads without errors, all navigation links resolve, no console errors in critical paths (dashboard, onboarding, settings).

Step 4: If all checks pass, promote the verified preview with `vercel promote <preview-url>`. If any check fails, output a detailed diagnostic report with the exact failure, affected files, and a proposed fix. Do not promote on failure.
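The final promote-or-halt decision is worth keeping as a pure function so the rule cannot drift between runs. A minimal sketch, assuming each check reports a name and a pass/fail flag; the `CheckResult` and `DeployDecision` types, the check names, and the preview URL are hypothetical.

```typescript
interface CheckResult {
  check: string;
  ok: boolean;
  detail?: string;
}

type DeployDecision =
  | { action: "promote"; previewUrl: string }
  | { action: "halt"; failures: CheckResult[] };

// Promotes only when at least one check ran and every check passed.
function decideDeploy(previewUrl: string, checks: CheckResult[]): DeployDecision {
  const failures = checks.filter((c) => !c.ok);
  // An empty check list counts as a halt: nothing was actually verified.
  if (checks.length === 0 || failures.length > 0) {
    return { action: "halt", failures };
  }
  return { action: "promote", previewUrl };
}

// Made-up results: one smoke check failed, so the pipeline halts.
const deployDecision = decideDeploy("https://preview.example.com", [
  { check: "homepage loads", ok: true },
  { check: "navigation links resolve", ok: true },
  { check: "no console errors on /dashboard", ok: false, detail: "hydration error" },
]);
```

Here `deployDecision.action` is `"halt"` with a single failure attached, which is the input for the diagnostic report.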

Full Feature Lifecycle in One Prompt

My most successful sessions already chain planning → implementation → build verification → code review → PR creation (like the Calendly connector: 12 files, 1,352 lines, one session). But my friction data shows 47 wrong_approach and 13 misunderstood_request events, often from underspecified intent. An autonomous feature agent that starts with a structured requirements phase, generates a test harness first, implements against those tests iteratively, runs my code review agent, addresses findings, and pushes a ready-to-merge PR would compress my typical 2-3 session feature cycle into a single autonomous run — turning my 82 fully_achieved sessions into 120+.

How to Start Experimenting

Leverage Claude Code's multi-file editing strength (98 successful multi-file changes) with a test-first workflow that uses Bash to run tests after each implementation phase, self-correcting until green.

I want to implement a new feature: [DESCRIBE FEATURE]. Follow this autonomous lifecycle: Phase 1 — REQUIREMENTS: Ask me up to 3 clarifying questions before proceeding. Do not skip this. Phase 2 — TEST HARNESS: Write integration and unit tests that define the expected behavior. Run them to confirm they fail for the right reasons. Phase 3 — IMPLEMENTATION: Build the feature across all necessary files (API routes, components, types, migrations). After each file group, run `npx tsc --noEmit` and fix any type errors before continuing. Phase 4 — GREEN TESTS: Run the test suite. If tests fail, analyze the failure, fix the implementation (not the tests), and re-run. Loop until all tests pass. Phase 5 — CODE REVIEW: Review your own changes for security issues (exposed secrets, missing auth checks, SQL injection), performance (N+1 queries, missing indexes), and Next.js best practices (server/client boundaries, proper data fetching). Fix any P0/P1 findings. Phase 6 — PR: Create a well-structured PR with a description covering what changed, why, testing done, and any migration steps. Push and output the PR URL.

The Bigger Picture

Right now, most people use Claude Code for single-task, single-session work: "fix this bug," "add this feature," "write this test." That's like using a spreadsheet as a calculator — technically correct but dramatically underutilizing the tool.

The future is compound workflows: chains of autonomous agents that handle entire development lifecycles — from planning through implementation through testing through deployment through monitoring. Not in theory. I've already seen pieces of this work.

The trajectory: We're moving from "AI writes code I review" to "AI runs a development pipeline I occasionally steer." The timeline for this transition is shorter than most people think.

The constraint isn't model capability — it's context management. The models can already do the work. The challenge is giving them enough context to do it reliably without human intervention at every step. Solving that is what turns AI coding assistants into AI development teams.