A field guide to the operating model

Build the system that builds the code.

The useful question is not whether AI makes engineering faster. It does. The harder question is whether that speed leaves behind a stronger system or a more fragile one. Compound engineering is an attempt to make each cycle improve the next one.

The idea comes primarily from Kieran Klaassen and Dan Shipper at Every. The examples here also draw from early work in the Monti monorepo, where the model became concrete through plans, review findings, and hardening docs.

Why this matters

Velocity is not the hard part. Preventing entropy is.

Most AI-assisted workflows focus on output. Compound engineering focuses on residue. What remains after the feature ships? Was a plan written that another agent could execute without guessing? Did review uncover a class of problem that can now be prevented? Did the team save the lesson anywhere a future session could retrieve it?

That is why the philosophy is more demanding than “use AI to code faster.” Faster coding by itself is cheap. It can recreate the same old mistakes with more impressive throughput. The compounding part is what makes the system improve rather than merely accelerate.

Kieran Klaassen’s cleanest line is still the best one: a human would remember; the AI would not. The whole system exists to close that gap. Plans should remember the repo’s patterns. Reviews should remember the failure modes you care about. Compound artifacts should remember the bugs and tradeoffs that would otherwise vanish into chat logs and pull request comments.

The loop

Plan → Work → Review → Compound is simple. The emphasis is not.

The loop is easy to memorize and easy to flatten into a slogan. The interesting part is where the time and rigor go. Planning is not a preamble. Review is not etiquette. Compound is not an optional archive step.

Brainstorm is upstream work for when the idea is still vague. Once the requirement is clear, planning should run without you. That is one of the sharpest practical ideas in the Every workflow: planning is where most of the thinking belongs.

Work becomes execution against a reviewed blueprint instead of improvisation. Review becomes multiple perspectives running in parallel instead of one person scanning a diff alone. Compound is the move that turns a solved problem into future leverage, and it should happen while the context is still fresh.

Plan

Turn fuzzy intent into a blueprint another agent can execute without guessing.

Work

Implement against that plan instead of negotiating the feature from scratch while coding.

Review

Use parallel scrutiny so humans can focus on intent, product fit, and risk tolerance.

Compound

Capture the lesson so the next cycle starts with stronger defaults and less re-learning.

Artifacts

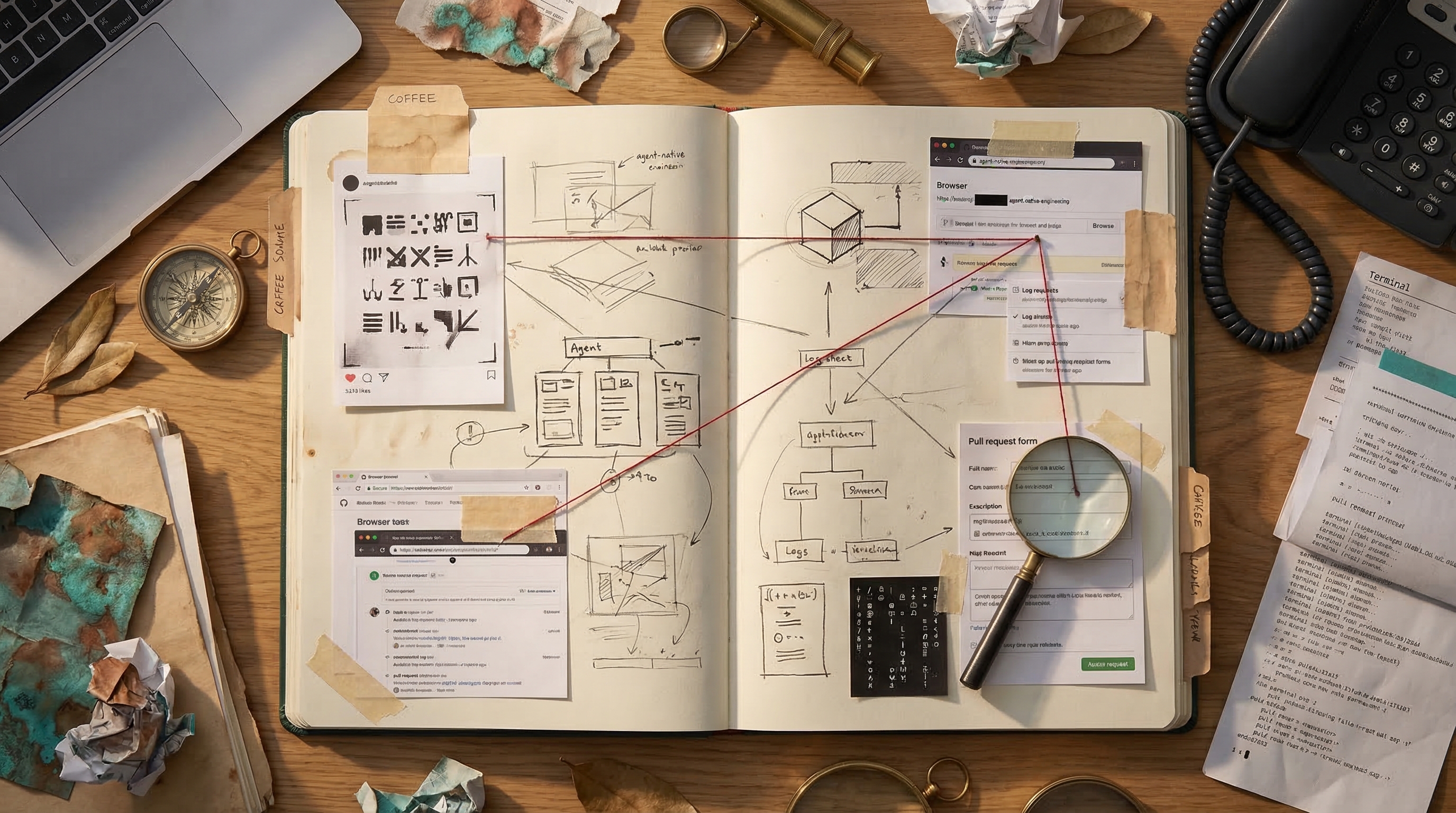

The workflow becomes persuasive when it leaves behind documents worth reusing.

A live demo should not just show an agent typing. It should show the residue the system leaves behind. Plans, review findings, and compound docs are what make the model feel operational instead of philosophical.

Artifact 01

/workflows:plan should produce a delivery sequence, not a pile of ideas.

The plan artifact should tell an implementing agent what to do, what order to do it in, and what to avoid touching yet. That is what turns planning into executable infrastructure rather than smart-sounding setup.

---

title: "feat: Deliver Paylocity time-entry reporting sync foundation"

type: feat

status: active

---

## Delivery decisions

- keep the workload in monti-platform

- limit week-one scope to time-entry pull + validation

- use Flex Consumption and prove dev before prod

Artifact 02

/workflows:review should turn vague unease into prioritized findings.

Review is where trust stops being psychological and becomes infrastructural. The useful output is not “looks good to me.” It is a list of concrete problems, clear severity, and an obvious next move.

P1 must fix

[ ] global Azure names would collide across environments (bootstrap review)

P2 should fix

[ ] auth boundary fails open at the platform layer (security + platform review)

[ ] deep links lose destination after sign-in (auth flow review)
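The P1 finding about global Azure names is concrete enough to sketch. Some Azure resource names, such as storage account names, must be globally unique, so a name reused for dev will collide with the same name in prod. The Python below shows one common fix under stated assumptions: a hypothetical project + workload + environment naming convention, with `storage_account_name` and the `paysync` workload token invented for illustration rather than taken from the Monti repo. It also enforces the storage account rule of 3 to 24 lowercase letters and digits.

```python
import re

# Storage account names are globally unique across Azure and must be
# 3-24 characters of lowercase letters and digits only.
STORAGE_NAME_RE = re.compile(r"^[a-z0-9]{3,24}$")


def storage_account_name(project: str, workload: str, env: str) -> str:
    """Build an environment-scoped storage account name (hypothetical convention).

    Including the environment token means dev and prod never compete for
    the same global name.
    """
    # Drop characters the storage naming rules reject, such as hyphens.
    name = re.sub(r"[^a-z0-9]", "", f"{project}{workload}{env}".lower())
    if not STORAGE_NAME_RE.fullmatch(name):
        raise ValueError(f"invalid storage account name: {name!r}")
    return name


if __name__ == "__main__":
    for env in ("dev", "prod"):
        print(storage_account_name("monti", "paysync", env))
```

Failing loudly on an over-length name is deliberate: silently truncating could cut off the environment token and bring the collision right back.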

Artifact 03

/workflows:compound is where a painful thread becomes reusable infrastructure.

The compound artifact is the strongest distinction between AI-assisted engineering and compound engineering. The feature ships once. The learning should ship forever.

---

module: Paylocity Time Entry Sync Runtime

tags: [paylocity, open-punch, reporting, testing]

severity: medium

---

## Durable fixes

- allow RelativeEnd to be null for true open punches

- map missing segment ends without inventing a closed shift

- expose OpenPunchCount in the reporting view

## Prevention

- validate live payload shapes before hardening the schema
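The durable fixes above describe a mapping rule precise enough to sketch. The Python below is a minimal illustration under stated assumptions: only `RelativeEnd` and `OpenPunchCount` come from the solution doc, while the payload keys `EmployeeId` and `RelativeStart`, the `PunchRow` shape, and the hash-based fallback key are hypothetical. It maps an open punch with a null end instead of a fabricated one, keys each punch on fields that exist whether it is open or closed, and computes the count a reporting view could expose.

```python
import hashlib
from dataclasses import dataclass
from typing import Any, Iterable, Optional

# Hypothetical payload field names; only RelativeEnd comes from the solution doc.
REQUIRED_FIELDS = ("EmployeeId", "RelativeStart")


@dataclass(frozen=True)
class PunchRow:
    row_key: str
    employee_id: str
    relative_start: str
    # None marks a true open punch; it is never replaced with a guessed end time.
    relative_end: Optional[str]


def validate_segment(segment: dict[str, Any]) -> None:
    """Reject payloads missing fields that every punch must carry."""
    missing = [field for field in REQUIRED_FIELDS if not segment.get(field)]
    if missing:
        raise ValueError(f"segment missing required fields: {missing}")


def stable_key(employee_id: str, relative_start: str) -> str:
    """Derive a fallback key from fields present on both open and closed punches.

    The same punch keeps the same key when a later sync reports its end time.
    """
    raw = f"{employee_id}|{relative_start}".encode("utf-8")
    return hashlib.sha256(raw).hexdigest()[:16]


def map_segment(segment: dict[str, Any]) -> PunchRow:
    validate_segment(segment)
    employee_id = str(segment["EmployeeId"])
    start = str(segment["RelativeStart"])
    # A missing, null, or empty RelativeEnd means the punch is still open.
    end = segment.get("RelativeEnd") or None
    return PunchRow(
        row_key=stable_key(employee_id, start),
        employee_id=employee_id,
        relative_start=start,
        relative_end=end,
    )


def open_punch_count(rows: Iterable[PunchRow]) -> int:
    """Count open punches so a reporting view surfaces them instead of hiding them."""
    return sum(1 for row in rows if row.relative_end is None)


if __name__ == "__main__":
    rows = [
        map_segment({"EmployeeId": "E1", "RelativeStart": "08:00", "RelativeEnd": "12:00"}),
        map_segment({"EmployeeId": "E1", "RelativeStart": "13:00", "RelativeEnd": None}),
    ]
    print(open_punch_count(rows))  # 1
```

Keeping `validate_segment` narrow matches the prevention note: check what live payloads actually contain before hardening the schema further.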

One real arc

Monti gave the loop a cleaner story: compare approaches, sequence the delivery, catch real risk, and keep the lesson.

The brainstorm documents do something simple but important: they compare paths before implementation. The CI foundation brainstorm preserved the choice between minimal guardrails, phased monorepo CI, and full compliance upfront. The later Paylocity reporting brainstorm preserved a second shape of decision: standalone repo, monorepo MVP, or near-real-time scope from day one.

From there, the Paylocity reporting-sync plan turned scope, runtime, repo placement, and deployment ownership into a staged execution sequence. It did not just say "build reporting." It constrained the first slice to time-entry pull, validation, sync observability, and dev-before-prod proof.

The valuable part is what happened next. Real payload validation exposed a contract mismatch around open punches. That did not stay trapped in debugging. It became a reusable solution document: nullable end times for true open punches, mapper rules that do not fabricate closed shifts, stable fallback keys, and reporting views that expose OpenPunchCount honestly.

The threshold

Compound engineering starts at Stage 3, because that is where the plan becomes the source of truth.

Most teams stall at Stage 2 because line-by-line supervision feels safer. It is also the point where the human becomes the bottleneck. Stage 3 is where plans harden enough that the human can stop hovering over every line and start evaluating whether the output matches the plan, works in reality, and respects the team’s standards.

That is why the jump from Stage 2 to Stage 3 feels uncomfortable. The workflow changes, but so does the emotional model of control. The right move is not to jump straight to a swarm of autonomous agents. It is to build enough trust in planning and review that the system becomes easier to rely on than your old habits.

More good artifacts

The point is not one perfect example. The point is that good plans and reusable solutions start to accumulate.

Plan backup

docs/plans/2026-03-24-feat-power-bi-exposure-for-paylocity-reporting-plan.md

This one is strong because it turns “just connect Power BI” into a deliberate contract: curated SQL views, import mode first, hourly refresh after sync, and explicit ownership of the semantic model.

Solution backup

docs/solutions/integration-issues/paylocity-rbac-bootstrap-needs-principal-ids-not-entra-lookups-20260324.md

A clean infrastructure lesson: stop forcing Terraform to do an unnecessary Entra lookup when Azure RBAC only needs the principal object ID.

Debugging backup

docs/solutions/integration-issues/paylocity-flex-consumption-zero-functions-after-successful-deploy-20260323.md

This is a good reminder that compound engineering is not prompt theater. It captured a real platform failure, an app rewrite, a Terraform fix, and a regression test.

Agent-native systems

If the developer can do it, the agent should eventually be able to do it too.

Every missing capability becomes manual labor somebody else has to absorb. Agent-native is not a luxury. It is the operational path out of invisible bottlenecks.

If the developer can run the app, inspect logs, open a pull request, check review comments, or verify behavior in a browser, the agent should gradually inherit those same capabilities. Otherwise you end up with a lopsided system where the model can generate code but not finish the job.

The goal is not “full autonomy” as a slogan. The goal is to remove the unnecessary handoffs that keep forcing a human back into the loop for purely mechanical reasons.

Development environment

- Run the app locally

- Run tests, linters, and type checks

- Run migrations and seed data

Git operations

- Create branches or worktrees

- Make commits and push

- Create PRs and read comments

Debugging

- View local logs

- View production logs read-only

- Take screenshots and inspect requests

- Access error tracking and monitoring

How to start

The first compounding win should be boring, local, and permanent.

The wrong way to adopt compound engineering is to imitate the most advanced version of the system on day one. The right way is to remove one repeated tax forever and then repeat.

Start with your instruction file. Tighten AGENTS.md or CLAUDE.md until it reflects your actual standards, not vague aspirations. Then pick one review tax you pay every week and make the system own it. Then save one solved problem into a searchable document.

The Every team has a useful forcing function for this: the $100 rule. When something fails that should have been prevented, feel the sting once and spend it on the permanent fix: a test, a rule, a monitor, or an eval. That is how a failure turns into a better system instead of another recurring tax on attention.

- Tighten AGENTS.md or CLAUDE.md with your actual conventions and non-negotiables.

- Turn one recurring review pain into a reviewer, check, or skill.

- Save one solved problem into a searchable solution doc.

- Add one new agent capability that removes a manual bottleneck.

- Repeat until the system becomes easier to trust than your old habits.